Let the Compiler Check Your Units -- Wu Yongwei

Mixing your units can be disastrous. Wu Yongwei takes a quick look at C++ unit libraries that can help keep everything in order.


Let the Compiler Check Your Units

by Wu Yongwei

From the article:

I recently came across a C++ standard proposal P3045 [P3045R7], which aims to add physical units to C++. Curious, I looked into the existing unit libraries and went down quite a rabbit hole.

Type safety and user-defined literals

Before exploring these libraries, I was already somewhat familiar with the idea of ‘type safety’. I was also aware that user-defined literals (UDLs) [CppReference-1] allow creating literals of specific types with ease. Typical uses in the standard library include

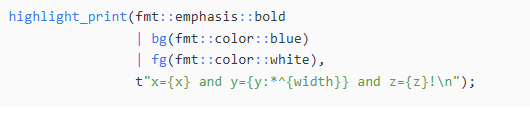

string/string_viewliterals and the chrono library [CppReference-2], which make code both convenient and safe.Figure 1 shows some simple examples.

```cpp
auto msg = "Hello "s + user_name;
auto t1 = chrono::steady_clock::now();
this_thread::sleep_for(500ms);
auto t2 = chrono::steady_clock::now();
auto duration = t2 - t1;
auto what = t1 + t2;       // Can't compile
cout << duration / 1.0ms;  // To double, in ms
```

In today's post, I will explain one of C++'s biggest pitfalls:


Even experienced C++ developers sometimes stumble on a deceptively simple question: what actually happens when a destructor throws an exception? This post breaks down the mechanics behind stack unwinding,